What Is a Low Latency Network and Why It Matters


In many industries, speed is not just about performance — it directly affects outcomes. This is especially true for financial trading, gaming platforms, and real-time cloud systems, where even a small delay can change how a system behaves.

The bigger issue is not always the delay itself, but how stable it is. When response times fluctuate, systems become unpredictable. For distributed environments, this quickly turns into a reliability problem.

This article explains what latency actually means in practice, where it comes from, and what companies can realistically do to reduce it across modern network infrastructure.

What Is Network Latency

Latency describes how long it takes for data to move between two points in a network. It is usually measured in milliseconds, or in microseconds for workloads such as trading, and reflects how quickly systems can react to each other.

Latency is also distinct from other network metrics that are often confused with it. Latency is the time it takes data to travel, jitter is the variation in that delay, packet loss means packets fail to arrive, and bandwidth describes how much data can be transferred over time. A network can have enough bandwidth and still perform poorly if delay is high, packets are lost, or jitter makes timing unpredictable.

Two measurements are commonly used:

- One-way delay — from sender to receiver

- Round-trip time (RTT) — request plus response

Most monitoring relies on RTT, but in environments like low latency trading, engineers often focus on one-way delay because it reflects execution timing more precisely. In practice, accurate one-way delay measurement requires synchronized clocks between endpoints.
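To see why synchronized clocks matter, here is a minimal Python sketch. The function names and numbers are illustrative assumptions, not taken from any monitoring tool. It shows that a clock offset on the receiver leaks directly into a one-way measurement, while RTT is unaffected because both of its timestamps come from the sender's clock. It also shows that halving RTT misestimates one-way delay when the two directions differ.

```python
def measured_one_way_ms(true_delay_ms: float, receiver_offset_ms: float) -> float:
    """One-way delay as it appears when the receiver clock is off by receiver_offset_ms."""
    send_ts = 0.0  # timestamp taken on the sender's clock
    recv_ts = send_ts + true_delay_ms + receiver_offset_ms  # taken on the receiver's clock
    return recv_ts - send_ts


def measured_rtt_ms(forward_ms: float, reverse_ms: float) -> float:
    """RTT uses only the sender's clock, so any clock offset cancels out."""
    return forward_ms + reverse_ms


if __name__ == "__main__":
    # True forward delay is 4 ms, but the receiver clock runs 2 ms ahead.
    print(measured_one_way_ms(4.0, 2.0))   # 6.0
    # Asymmetric path: 4 ms out, 10 ms back. RTT / 2 suggests 7 ms each way.
    print(measured_rtt_ms(4.0, 10.0) / 2)  # 7.0
```

In the first case the 2 ms clock error is indistinguishable from real delay, which is why one-way measurement depends on clock synchronization between endpoints.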

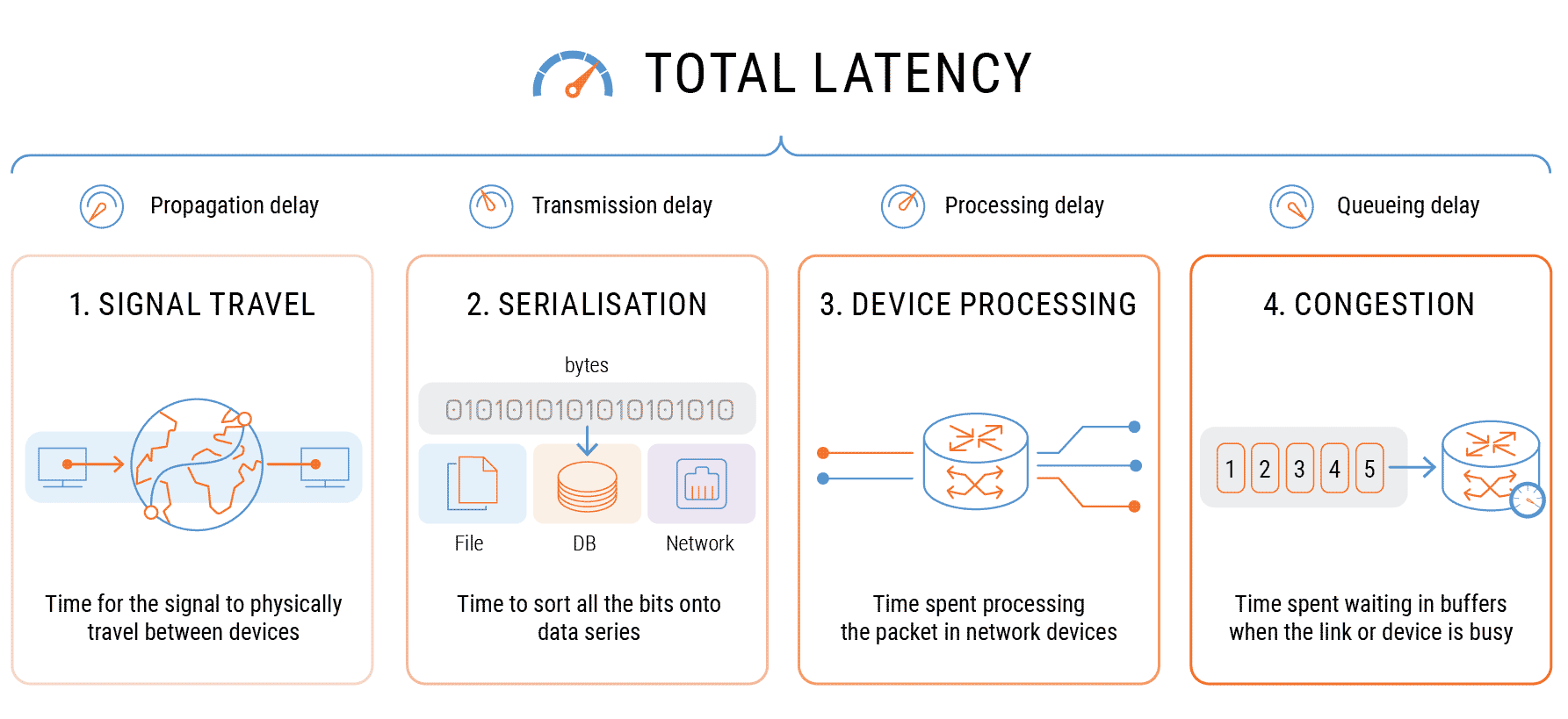

Latency is not a single value. It is the sum of several components:

- propagation delay: signal travel over distance

- serialization (transmission) delay: time needed to put the data onto the link

- processing delay: work done inside network devices

- queuing delay: waiting time during congestion

Even when average delay looks acceptable, variation in these components, queuing in particular, introduces jitter. For real-time workloads, this often creates more issues than the delay itself.
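The four components above (distance, transmission, processing, and congestion) can be added up in a rough model. The Python sketch below is only an estimate under stated assumptions: light in fiber is taken to travel at about two thirds of its speed in vacuum, and processing and queuing delay are supplied by the caller rather than derived. All names are hypothetical.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458
FIBER_VELOCITY_FACTOR = 0.67  # assumption: roughly two thirds of c in glass fiber


def propagation_ms(distance_km: float) -> float:
    """Time for the signal to cover the distance."""
    return distance_km / (SPEED_OF_LIGHT_KM_S * FIBER_VELOCITY_FACTOR) * 1000


def serialization_ms(packet_bytes: int, link_bps: float) -> float:
    """Time to clock all bits of one packet onto the link."""
    return packet_bytes * 8 / link_bps * 1000


def one_way_ms(distance_km: float, packet_bytes: int, link_bps: float,
               processing_ms: float = 0.0, queuing_ms: float = 0.0) -> float:
    """Sum of the four delay components for a single packet."""
    return (propagation_ms(distance_km)
            + serialization_ms(packet_bytes, link_bps)
            + processing_ms
            + queuing_ms)


if __name__ == "__main__":
    # 1,000 km of fiber, 1,500-byte packet, 1 Gbit/s link, no queuing.
    print(round(propagation_ms(1000), 3))
    print(round(serialization_ms(1500, 1_000_000_000), 3))
    print(round(one_way_ms(1000, 1500, 1_000_000_000), 3))
```

For these inputs propagation is roughly 5 ms while serialization is about 0.012 ms, which illustrates why distance dominates on long paths and why queuing is the component that varies most.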

What Causes High Latency

Latency does not come from one source — it builds up along the entire path.

The most common factors include:

- long physical distance between endpoints

- indirect routing paths across multiple networks

- congestion in shared infrastructure

- limited or inefficient interconnection between providers

- overloaded hardware handling traffic

On the public internet, traffic rarely takes the shortest path. Routing decisions are often based on business relationships between networks, not technical efficiency. As a result, packets can travel much farther than the geographic distance between endpoints suggests.
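One way to check whether a route is longer than it needs to be is to compare measured RTT with a physical lower bound. The Python sketch below is an illustration, not a standard tool: it computes the great-circle distance between two coordinates with the haversine formula, assumes light covers about 200 km per millisecond in fiber, and reports a "path stretch" ratio. The function names and the measured RTT value are hypothetical.

```python
import math

EARTH_RADIUS_KM = 6371.0
FIBER_KM_PER_MS = 200.0  # assumption: roughly 200 km per millisecond in fiber


def great_circle_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Haversine distance between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def min_rtt_ms(distance_km: float) -> float:
    """Lower bound on RTT if fiber followed the great circle exactly."""
    return 2 * distance_km / FIBER_KM_PER_MS


def path_stretch(measured_rtt_ms: float, distance_km: float) -> float:
    """How many times longer the measured RTT is than the physical bound."""
    return measured_rtt_ms / min_rtt_ms(distance_km)


if __name__ == "__main__":
    london_ny = great_circle_km(51.5074, -0.1278, 40.7128, -74.0060)
    print(round(london_ny), "km")
    print(round(min_rtt_ms(london_ny), 1), "ms minimum RTT")
    # Hypothetical measured RTT of 95 ms on a default internet path.
    print(round(path_stretch(95.0, london_ny), 2), "x stretch")
```

A stretch close to 1 means the route is near the physical limit. A much larger value points to detours, extra hops, or queuing along the path.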

There are also less visible factors:

- asymmetric routing between directions

- short bursts of congestion (microbursts)

- bufferbloat (excess queuing delay caused by oversized buffers) on overloaded links

Because of this, reducing latency is not just about adding bandwidth — it requires control over routing and traffic flow.
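The effect of bufferbloat can be estimated with simple arithmetic: a packet that arrives behind a full buffer has to wait until everything ahead of it is sent. The Python sketch below assumes a single FIFO queue that drains at the full link rate. The function names are hypothetical and the buffer sizes are examples only.

```python
def queue_delay_ms(queued_bytes: int, link_bps: float) -> float:
    """Waiting time behind queued_bytes already sitting in a FIFO buffer."""
    return queued_bytes * 8 / link_bps * 1000


def max_queue_bytes(target_ms: float, link_bps: float) -> int:
    """Largest backlog that keeps queuing delay at or below target_ms."""
    return int(target_ms * link_bps / 8000)


if __name__ == "__main__":
    # A full 256 KiB buffer on a 10 Mbit/s link.
    print(round(queue_delay_ms(256 * 1024, 10_000_000), 1), "ms")
    # Backlog allowed if queuing delay should stay under 5 ms on the same link.
    print(max_queue_bytes(5.0, 10_000_000), "bytes")
```

With these numbers a full buffer adds roughly 210 ms of delay, while a 5 ms target allows only 6250 bytes of backlog. This is the gap that traffic shaping and queue management aim to close.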

Key Factors and Optimization Methods

| Factor | Impact | How to Improve Performance |
|---|---|---|
| Distance | Propagation delay increase | Use low latency colocation |
| Routing | Suboptimal AS-path selection | Use low latency routes |
| Congestion | Queuing + jitter | Use dedicated connectivity (MPLS, IPLC) |
| Shared infrastructure | Unstable performance | Use private or low latency VPN |
| Server location | Higher RTT | Deploy low latency dedicated servers |
| Peering quality | Extra IX hops | Optimize IP transit and direct peering |
| Bufferbloat | Tail latency spikes | Apply traffic shaping and QoS |

In practice, improvements usually come from combining several of these changes rather than relying on one fix.

Why Low Latency Matters for Business

Latency becomes critical when systems depend on timing rather than just throughput.

Low Latency Trading

In trading environments, timing determines execution results. Market data arrives continuously, and decisions must be processed immediately.

What matters most:

- how quickly data reaches the system

- how fast orders travel to execution venues

- how stable the connection remains during load

Latency influences:

- position in the order book

- ability to capture short-lived opportunities

- execution accuracy

This is why the design of a low latency trading network focuses on the entire path instead of isolated segments.

Online Gaming Infrastructure

Games rely on constant synchronization between players and servers.

Typical characteristics:

- frequent state updates

- sensitivity to timing inconsistencies

- no retransmission in many cases (UDP traffic)

If packets arrive unevenly, gameplay becomes inconsistent. Players experience lag, even when average delay looks acceptable.

To support ultra-low latency gaming, infrastructure is usually built around:

- servers placed close to user regions

- stable routing between locations

- controlled backbone performance

Real-Time Applications and Cloud Systems

Modern applications often rely on multiple services working together.

Latency affects:

- chains of API calls

- database replication timing

- coordination between microservices

In distributed systems, small delays accumulate across multiple steps. This makes performance harder to predict as systems scale.
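A short Python sketch makes the accumulation concrete. It assumes calls run one after another and that slow responses are statistically independent, which real systems only approximate. The function names are hypothetical.

```python
def chain_latency_ms(step_latencies_ms: list[float]) -> float:
    """Total latency of calls executed sequentially."""
    return sum(step_latencies_ms)


def prob_any_slow(num_calls: int, per_call_fast_prob: float = 0.99) -> float:
    """Chance that at least one of num_calls independent calls lands in its slow tail.

    per_call_fast_prob=0.99 means each call beats its own p99 latency 99% of the time.
    """
    return 1 - per_call_fast_prob ** num_calls


if __name__ == "__main__":
    print(chain_latency_ms([2.0, 5.0, 3.0]), "ms for three sequential calls")
    for n in (1, 10, 100):
        print(n, "calls:", round(prob_any_slow(n) * 100, 1), "% hit a p99-slow call")
```

Under these assumptions, a request that fans out into 10 calls hits at least one p99-slow call about 9.6% of the time, and with 100 calls the figure is about 63%. This is why tail latency of individual services matters more as the number of dependencies grows.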

How to Measure Latency and Jitter

Latency is typically observed using:

- ping for round-trip delay

- traceroute to understand the path

- monitoring tools that chart latency over time

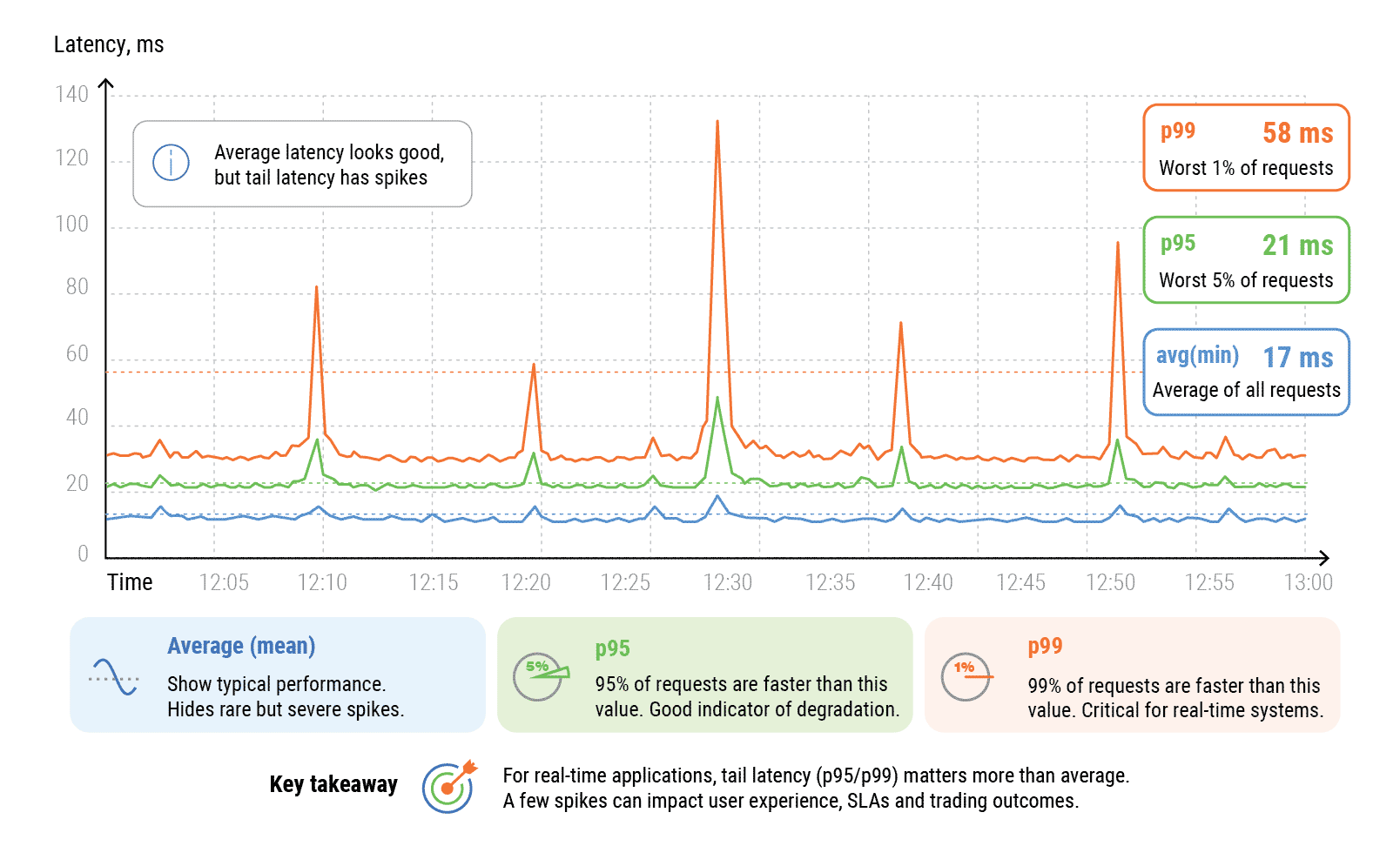

Jitter is tracked as the variation in delay between consecutive packets. High jitter usually indicates unstable routing or congestion.

For real-time systems, average latency is often not enough. Engineers also watch p95 and p99 latency (the values that 95% and 99% of samples stay at or below), because short spikes under load can matter more than behavior under normal conditions.
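The statistics mentioned here can be computed from a list of RTT samples, for example values collected from repeated pings. The Python sketch below uses a nearest-rank percentile and a simple jitter figure, the mean absolute difference between consecutive samples. This is a simplification and not the smoothed interarrival jitter estimator defined in RFC 3550. The function names and sample data are assumptions made for illustration.

```python
import math


def percentile(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of values at or below it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def mean_jitter(samples: list[float]) -> float:
    """Mean absolute difference between consecutive samples, in arrival order."""
    if len(samples) < 2:
        return 0.0
    diffs = [abs(b - a) for a, b in zip(samples, samples[1:])]
    return sum(diffs) / len(diffs)


if __name__ == "__main__":
    # Hypothetical RTT samples in ms: mostly 10 ms with two spikes.
    rtts = [10.0] * 98 + [50.0, 200.0]
    print("mean  :", sum(rtts) / len(rtts))
    print("p95   :", percentile(rtts, 95))
    print("p99   :", percentile(rtts, 99))
    print("max   :", max(rtts))
    print("jitter:", round(mean_jitter(rtts), 2))
```

For this sample set the mean is about 12.3 ms and p95 is 10 ms, so both look healthy, while p99 (50 ms) and the maximum (200 ms) expose the spikes that a real-time workload would actually feel.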

For production systems, continuous monitoring is necessary to maintain SLA targets and catch deviations early.

How to Achieve Low Latency

Lower latency does not come from a single change. It requires control over how traffic is routed and where systems are located.

Common approaches include:

- using low latency routes instead of default internet paths

- deploying systems in a low latency data center

- refining routing and peering policies

- monitoring performance continuously

- replacing shared infrastructure with dedicated connectivity (MPLS, IPLC)

These measures do not always produce the lowest possible RTT, but they usually improve route stability, reduce jitter, and make performance more predictable under load.

Companies looking for the lowest latency internet connection usually move away from best-effort routing toward engineered network paths.

Public Internet vs Low Latency Infrastructure

| Parameter | Public Internet | Low Latency Infrastructure |
|---|---|---|
| Routing | Dynamic BGP | Engineered paths |
| Latency | Variable | Stable and predictable |
| Jitter | Variable, often high | Minimal |
| Packet loss | Possible | Actively minimized |
| Tail latency | Uncontrolled | Controlled |
| SLA | Limited | Defined latency SLA |

The difference becomes most visible under load, when stability matters more than peak speed.

How IPTP Networks Builds Ultra-Low Latency Infrastructure

IPTP Networks provides low latency network services for businesses where stability and predictability are critical.

The infrastructure is built around routing control and global connectivity.

Key elements include:

- Low Latency Routes: paths optimized between financial hubs and exchange points help reduce delay and avoid unnecessary hops.

- Colocation: systems placed closer to users and exchanges reduce physical distance and improve response time.

- Dedicated Connectivity: MPLS, IPLC, and private circuits provide stable performance without shared network congestion.

- Best Path Tool: real-time analysis helps select routes based on latency and stability.

- IP Transit and Peering: multi-provider backbone improves reach and reduces dependence on congested paths.

- Monitoring and SLA Control: continuous visibility allows quick detection of anomalies and helps keep performance stable over time.

Conclusion

Latency shapes how systems behave under real conditions. For trading, gaming, and distributed applications, stability often matters as much as speed.

Reducing latency requires a combination of routing control, infrastructure placement, and continuous monitoring. With the right setup, companies can build systems that remain predictable even under load.
